Hot-Swap Models Mid-Conversation

Don't be locked into one brain. Start with a fast model for brainstorming and switch to a high-reasoning model for complex coding or analysis without losing context.

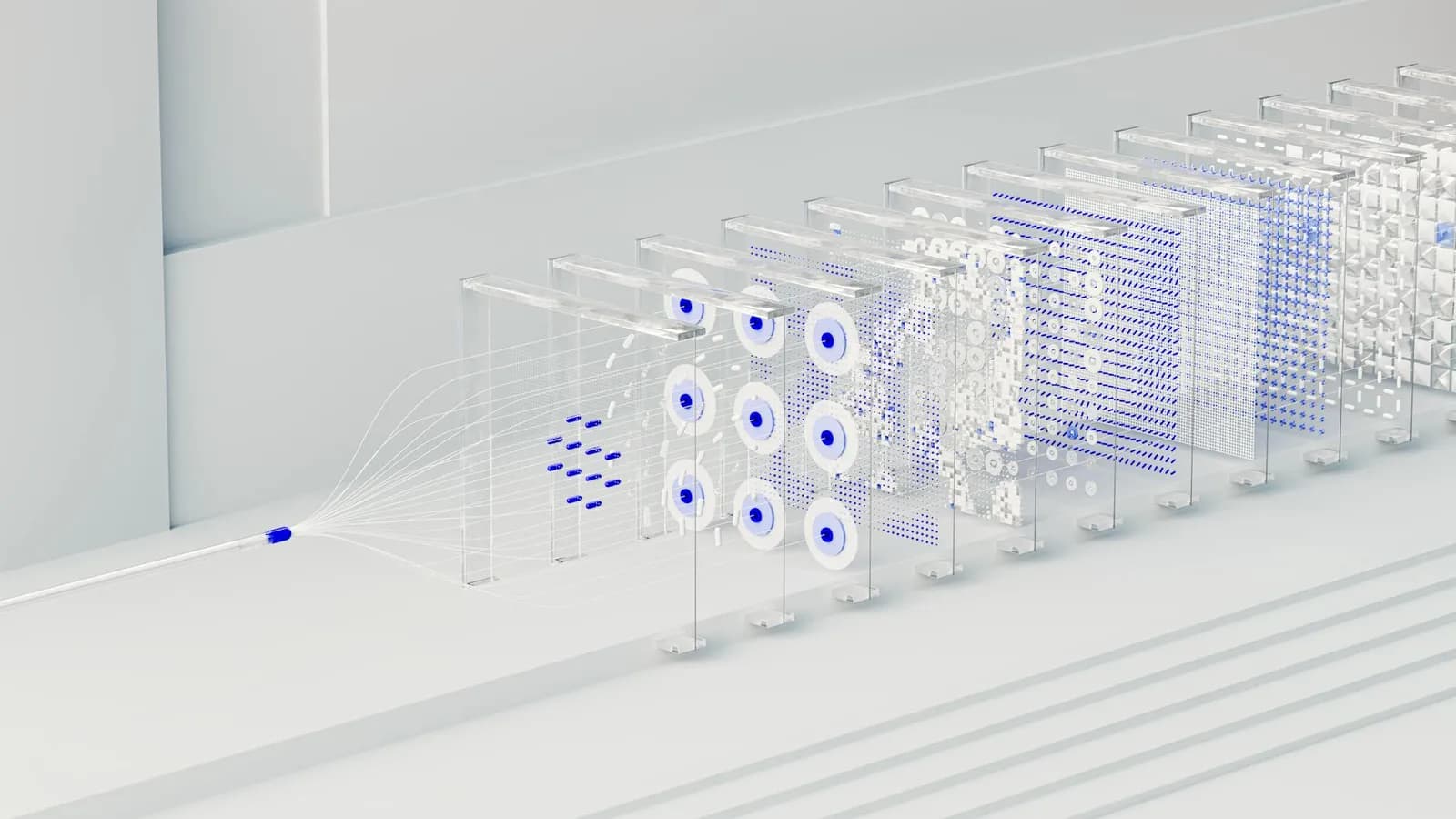

How it works

AuraAI maintains your conversation state across different model architectures seamlessly.

Context Preservation

Switching models doesn't mean starting over. We re-inject your history into the new model's context window automatically.
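One way to picture this is a conversation object that keeps its history in a provider-neutral form and hands the whole history to whichever model is active. The sketch below is illustrative only and is not AuraAI's actual implementation: `Conversation`, `ModelFn`, and `fake_model` are hypothetical names, and the stub models stand in for real provider calls.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Message = Dict[str, str]  # {"role": "user" | "assistant", "content": "..."}
ModelFn = Callable[[List[Message]], str]  # hypothetical stand-in for a provider call


@dataclass
class Conversation:
    models: Dict[str, ModelFn]
    active: str
    history: List[Message] = field(default_factory=list)

    def switch(self, name: str) -> None:
        """Change the active model; the stored history is left untouched."""
        if name not in self.models:
            raise KeyError(f"unknown model: {name}")
        self.active = name

    def ask(self, prompt: str) -> str:
        """Append the prompt, send the full history to the active model, store the reply."""
        self.history.append({"role": "user", "content": prompt})
        reply = self.models[self.active](list(self.history))
        self.history.append({"role": "assistant", "content": reply})
        return reply


def fake_model(label: str) -> ModelFn:
    # Stub model (assumption): reports how many messages it received.
    return lambda messages: f"{label} saw {len(messages)} messages"


chat = Conversation(
    models={"fast": fake_model("fast"), "deep": fake_model("deep")},
    active="fast",
)
first = chat.ask("Brainstorm product names")
chat.switch("deep")
second = chat.ask("Now design the database schema")
```

After the switch, the second model receives the earlier user prompt and the first model's reply along with the new prompt, so nothing has to be retyped.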

Multi-Model Chains

Use different models for different steps of a single task: for example, GPT-5.4 for strategy, Claude 4.6 for writing, and Gemini 3.1 for data.
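A chain like this can be sketched as a list of steps, each pairing a label with a model call, where every step receives the previous step's output. This is a minimal sketch under stated assumptions: `run_chain` and the lambda stubs are hypothetical and do not call any real provider.

```python
from typing import Callable, List, Tuple

# (step label, callable) pairs; the callables are stubs, not real provider clients.
Step = Tuple[str, Callable[[str], str]]


def run_chain(task: str, steps: List[Step]) -> List[Tuple[str, str]]:
    """Feed each step's output into the next step and record every result."""
    transcript: List[Tuple[str, str]] = []
    current = task
    for label, model in steps:
        current = model(current)
        transcript.append((label, current))
    return transcript


steps: List[Step] = [
    ("strategy", lambda text: f"plan({text})"),
    ("writing", lambda text: f"draft({text})"),
    ("data", lambda text: f"tables({text})"),
]
result = run_chain("launch brief", steps)
```

In practice each stub would be replaced by a call to the model chosen for that step, and the transcript keeps every intermediate result available for review.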

Cost Efficiency

Start with lightweight models for simple questions and only 'upgrade' to heavy-lifting models when necessary.
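The upgrade decision can also be automated with a simple routing rule. The sketch below is a hypothetical heuristic, not how AuraAI decides: the `route` function, the keyword list, and the word-count threshold are all assumptions chosen for illustration.

```python
from typing import Callable, Tuple

# Assumed signals that a prompt needs a heavier model.
HEAVY_KEYWORDS: Tuple[str, ...] = ("refactor", "prove", "architecture", "analyze")


def route(
    prompt: str,
    light: Callable[[str], str],
    heavy: Callable[[str], str],
    max_light_words: int = 20,
) -> str:
    """Send long or keyword-flagged prompts to the heavy model, everything else to the light one."""
    lowered = prompt.lower()
    needs_heavy = (
        len(prompt.split()) > max_light_words
        or any(keyword in lowered for keyword in HEAVY_KEYWORDS)
    )
    return heavy(prompt) if needs_heavy else light(prompt)


def light_model(prompt: str) -> str:
    return f"light: {prompt}"  # stub for a lightweight model


def heavy_model(prompt: str) -> str:
    return f"heavy: {prompt}"  # stub for a high-reasoning model


simple = route("What is a tuple?", light_model, heavy_model)
complex_ = route("Design the architecture for a billing service", light_model, heavy_model)
```

A real router would likely use richer signals than keywords, but the shape is the same: default to the lightweight model and escalate only when a prompt calls for it.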

Privacy First

Your data is encrypted regardless of which provider is currently processing your request.

Native Latency

Direct integrations with OpenAI, Anthropic, and Google Cloud keep overhead minimal.

One Thread, Multiple Minds

Imagine asking GPT-5.4 for a complex code architecture, then asking Claude 4.6 to review that same code for security vulnerabilities, all within the same thread.